Popular posts

Write to think.

Publish to connect.

AI can generate a thousand articles a minute. But it can't do your thinking for you. Hashnode is a community of builders, engineers, and tech leaders who blog to sharpen their ideas, share what they've learned, and grow alongside people who care about the craft.

Your blog is your reputation — start building it.

New & popular

How We Built a No-Code Landing Page Editor That Ships Static Pages in Minutes

5h ago · 6 min read · We got tired of changing hex codes for a living. So we automated ourselves out of the job. The Old Way (Pain): Every "smal

Connecting frontend and backend in JavaScript | Fullstack Proxy and CORS

1h ago · 4 min read · Connecting a React frontend with an Express backend is not complicated; it comes down to following a few solid practices: keep API routes structured (/api/...); expect CORS issues in development; use Vite p

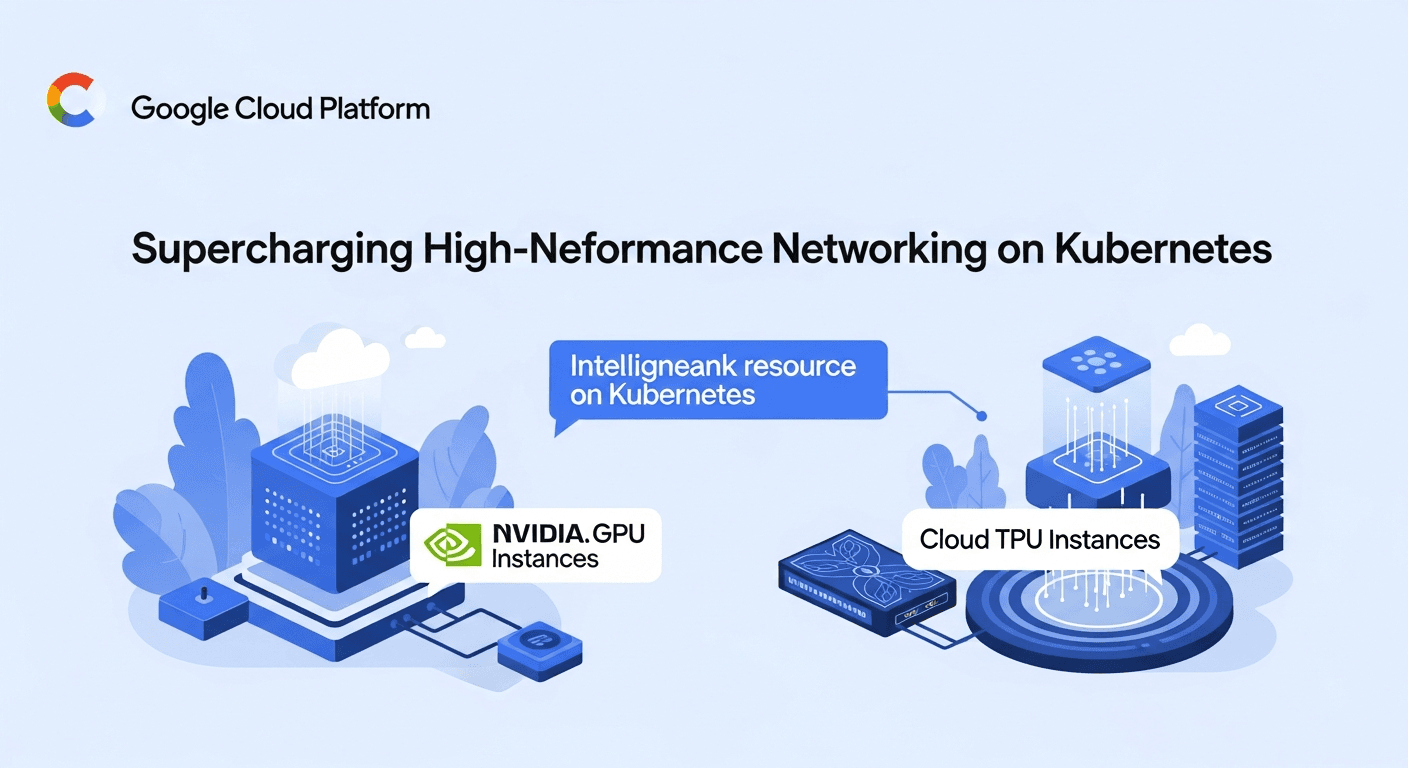

GKE Managed DRANET is GA: Supercharging High-Performance Networking on Kubernetes

10h ago · 3 min read · If you've ever tried to run some serious AI or machine learning workloads on Kubernetes, you know networking can be a real pain point. Moving massive datasets around, especially when you're dealing wi

Understanding Pathways: How Google Scales to Thousands of TPUs (WIP)

8h ago · 14 min read · Photo: Vincent Tjeng. Jeff Dean presented the Pathways vision back in 2021: train a single large model that can do millions of things. At the time, ChatGPT didn't exist yet, and this idea felt genuinel

The AI Security Inflection Point: How Enterprises Must Defend Against 2026's Most Dangerous Threat Landscape

34m ago · 13 min read · The threat intelligence community has a phrase for what's happening right now: the "AI-fication of cyberthreats." It refers not just to attac...
