Ever since I was first taught the Poisson and exponential distributions, I have wondered how to apply them to model a real-world scenario such as people arriving at a shop. Last week I was in a big shopping center and thought it was the perfect time to gather some data, so I chose a shop (at random) and started recording on a piece of paper how many people arrived and their inter-arrival times.

I went through this process for an hour in the middle of the afternoon (around 4 to 5pm) on a weekday, when the influx of customers should be roughly constant over time, or so I am told. Here you can download the data I gathered in .txt format. Note that even though the file is a text file, it is formatted as a .csv so that R can read it easily.

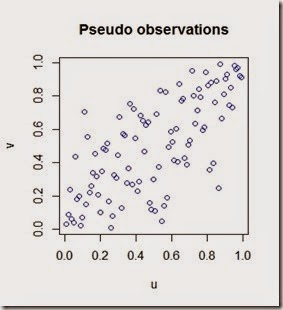

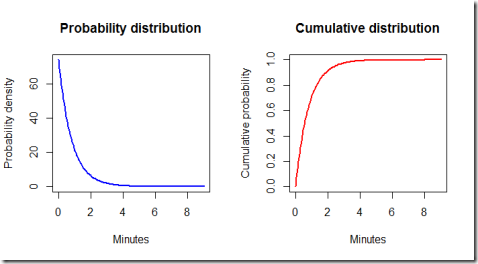

What is an inter-arrival time? It is the interval of time between two consecutive arrivals. If arrivals occur independently of each other at a constant average rate (that is, they form a Poisson process), the inter-arrival times are exponentially distributed. The blue histogram of the observations below supports this assumption.
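To make the definition concrete, here is a minimal Python sketch (the post's own code is in R) that turns a list of arrival timestamps into inter-arrival times and estimates the exponential rate by maximum likelihood, which for an exponential sample is one over the sample mean. The timestamps and the helper names are hypothetical, not the recorded data.

```python
# Sketch: arrival timestamps -> inter-arrival times -> exponential rate.
# The timestamps below are HYPOTHETICAL, not the data recorded in the shop.

def inter_arrival_times(arrivals):
    """Return the gaps between consecutive arrival times (same unit as input)."""
    return [later - earlier for earlier, later in zip(arrivals, arrivals[1:])]

def exponential_rate_mle(gaps):
    """Maximum-likelihood estimate of the exponential rate: 1 / sample mean."""
    return len(gaps) / sum(gaps)

# Hypothetical arrival times, in minutes since the observation started.
arrivals = [0.0, 0.4, 1.5, 1.9, 3.1, 3.3]
gaps = inter_arrival_times(arrivals)   # about [0.4, 1.1, 0.4, 1.2, 0.2]
rate = exponential_rate_mle(gaps)      # about 1.515 arrivals per minute

print([round(g, 2) for g in gaps])
print(round(rate, 3))
```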

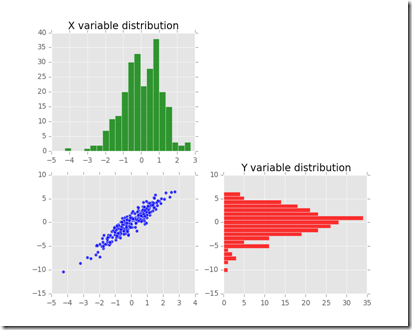

Summary of gathered data:

- I observed customer arrivals for 58.73 minutes (about an hour).

- During that time 74 “shopping groups” entered the shop. A “shopping group” here is a broad label for a group of 1, 2, 3 or 4 actual customers. The red histogram describes the group sizes in greater detail.
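A quick back-of-the-envelope estimate of the arrival rate from these two figures, assuming the rate is simply the number of groups divided by the observation time (the R code in the post may estimate it somewhat differently):

```python
# Crude rate estimate from the summary figures above:
# 74 shopping groups observed over 58.73 minutes.
observed_groups = 74
observed_minutes = 58.73

rate_per_minute = observed_groups / observed_minutes   # lambda, groups per minute
rate_per_hour = rate_per_minute * 60                   # same rate, groups per hour
mean_gap_minutes = 1 / rate_per_minute                 # expected inter-arrival time

print(f"lambda = {rate_per_minute:.3f} groups/min = {rate_per_hour:.1f} groups/h")
print(f"mean inter-arrival time = {mean_gap_minutes:.3f} min "
      f"(about {mean_gap_minutes * 60:.0f} s)")
```

This gives roughly 1.26 groups per minute (about 75.6 per hour), i.e. a new group every 0.79 minutes on average.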

As you can see, it seems reasonable to assume that the inter-arrival times are exponentially distributed.

The red histogram above clearly shows that the majority of “shopping groups” are composed of either a single customer or a couple of customers. According to the observed data, groups of 3 or 4 people are less likely to appear. A good estimate of the probability of each group size is the relative frequency of that size in the observations.
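The relative-frequency estimate can be sketched in a few lines of Python. The group sizes below are a hypothetical toy sample, not the counts behind the red histogram.

```python
from collections import Counter

def relative_frequencies(group_sizes):
    """Estimate P(group size = k) as the share of observed groups of size k."""
    counts = Counter(group_sizes)
    total = len(group_sizes)
    return {size: counts[size] / total for size in sorted(counts)}

# HYPOTHETICAL sizes of 10 shopping groups (not the observed data).
toy_sizes = [1, 2, 1, 1, 2, 3, 1, 2, 4, 1]
probs = relative_frequencies(toy_sizes)
print(probs)  # {1: 0.5, 2: 0.3, 3: 0.1, 4: 0.1}
```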

The following code produces the two plots above:

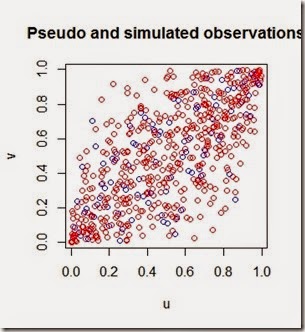

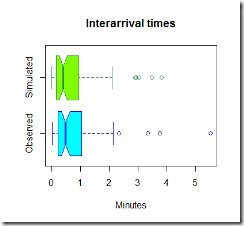

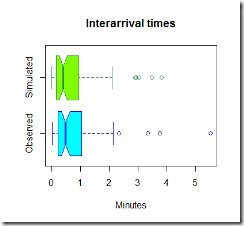

Now that we have loaded the data, it is time to simulate some observations and compare them with the real ones. The simulated data is shown below in green.
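For readers without R, here is a minimal Python sketch of this kind of simulation (the post's own simulation is written in R). It draws exponential gaps with `random.Random.expovariate` until the observation window is over and assigns each group a size. The rate comes from the summary figures (74 groups in 58.73 minutes); the group-size probabilities and the helper name `simulate_arrivals` are hypothetical placeholders.

```python
import random

def simulate_arrivals(rate_per_minute, duration_minutes, size_probs, seed=None):
    """Simulate one observation window of a Poisson arrival process.

    Inter-arrival times are drawn from an exponential distribution with the
    given rate; each arriving group gets a size drawn from size_probs.
    Returns a list of (arrival_time, group_size) pairs.
    """
    rng = random.Random(seed)
    sizes = list(size_probs)
    weights = [size_probs[s] for s in sizes]
    t = 0.0
    arrivals = []
    while True:
        t += rng.expovariate(rate_per_minute)   # next exponential gap
        if t > duration_minutes:
            break
        size = rng.choices(sizes, weights=weights, k=1)[0]
        arrivals.append((t, size))
    return arrivals

# Rate from the observed summary; the size probabilities are HYPOTHETICAL.
sim = simulate_arrivals(74 / 58.73, 60.0, {1: 0.5, 2: 0.3, 3: 0.1, 4: 0.1}, seed=1)
gaps = [b[0] - a[0] for a, b in zip(sim, sim[1:])]
print(len(sim), "groups,", sum(s for _, s in sim), "people")
print("mean simulated gap:", round(sum(gaps) / len(gaps), 3), "min")
```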

The code used to simulate the data is shown below.

The graphs above look nice, but on their own they are not much use: we need to compare the two sets of graphs to judge whether our model is adequate for this simple task.

It looks like our model tends to overestimate the number of inter-arrival times in the 0-0.5 minute range; however, this is just one simulation, and the average inter-arrival time is close to what we expected under this model. It seems reasonable to use this distribution to estimate the inter-arrival times and the number of people arriving at the shop.
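One way to make this comparison less dependent on eyeballing histograms is to put the gaps into the same bins and set the counts next to the counts the exponential model predicts, n(F(b) - F(a)) for each bin [a, b). The Python sketch below does this for one simulated sample of 74 gaps; the same `observed_bin_counts` helper (a hypothetical name) could be applied to the recorded gaps. With a rate of about 1.26 per minute the model expects roughly 47% of the gaps, about 34.6 of 74, below 0.5 minutes, so it also shows how much a single simulation can wander around that figure.

```python
import math
import random

def expected_bin_counts(rate, n, edges):
    """Expected number of n exponential gaps falling in each [a, b) bin."""
    cdf = lambda x: 1 - math.exp(-rate * x)
    return [n * (cdf(b) - cdf(a)) for a, b in zip(edges, edges[1:])]

def observed_bin_counts(gaps, edges):
    """Count how many gaps fall in each [a, b) bin."""
    counts = [0] * (len(edges) - 1)
    for g in gaps:
        for i, (a, b) in enumerate(zip(edges, edges[1:])):
            if a <= g < b:
                counts[i] += 1
                break
    return counts

rate = 74 / 58.73                 # groups per minute, from the summary figures
edges = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, math.inf]
rng = random.Random(7)
gaps = [rng.expovariate(rate) for _ in range(74)]   # one simulated sample

expected = expected_bin_counts(rate, len(gaps), edges)
observed = observed_bin_counts(gaps, edges)
for (a, b), e, o in zip(zip(edges, edges[1:]), expected, observed):
    print(f"[{a}, {b}): expected {e:5.1f}, simulated {o}")
```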

The R code:

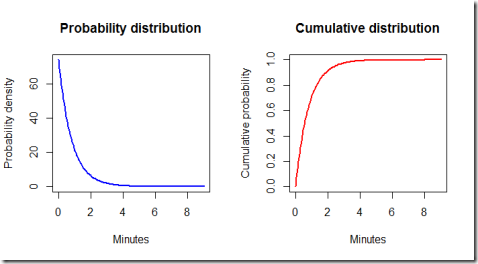

Finally, let's have a look at the ideal exponential distribution given the estimated parameters. Note that some adjustments are necessary because our model is set up so that the simulated times are in hours, while for practical purposes it is better to work with minutes. Furthermore, using the exponential distribution formulas we can calculate some probabilities.
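For reference, these are the standard exponential formulas involved, together with the hours-to-minutes conversion of the rate. The numerical values assume the rate is estimated as 74 groups over 58.73 minutes, which may differ slightly from the estimate used in the R code.

$$
f(t) = \lambda e^{-\lambda t}, \qquad F(t) = P(T \le t) = 1 - e^{-\lambda t}, \qquad E[T] = \frac{1}{\lambda}, \qquad t \ge 0
$$

$$
\lambda_{\text{min}} = \frac{\lambda_{\text{h}}}{60} \approx \frac{75.6}{60} \approx 1.26 \text{ groups per minute}, \qquad E[T] = \frac{1}{\lambda_{\text{min}}} \approx 0.79 \text{ minutes}
$$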

By running the code we find that the probability of the waiting time being less than 3 minutes is 0.975, meaning that it is very likely that the next customer group will show up within three minutes.
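The same kind of probability can be computed directly from the exponential CDF, P(T < t) = 1 - exp(-λt). The Python sketch below uses the crude rate 74/58.73 groups per minute and also checks the link with the Poisson distribution: "the next group arrives within t minutes" is the same event as "at least one arrival in t minutes", whose probability is 1 - P(N(t) = 0) with N(t) Poisson of mean λt. The function names are illustrative.

```python
import math

def prob_gap_less_than(t_minutes, rate_per_minute):
    """P(T < t) for an exponential inter-arrival time T: 1 - exp(-rate * t)."""
    return 1 - math.exp(-rate_per_minute * t_minutes)

def poisson_pmf(k, mean):
    """P(N = k) for a Poisson count N with the given mean."""
    return math.exp(-mean) * mean ** k / math.factorial(k)

rate = 74 / 58.73  # groups per minute; crude estimate from the summary figures

for t in (0.5, 1, 2, 3):
    print(f"P(T < {t} min) = {prob_gap_less_than(t, rate):.3f}")

# Same event seen through the Poisson count: at least one arrival in 3 minutes.
print(f"1 - P(N(3) = 0) = {1 - poisson_pmf(0, 3 * rate):.3f}")
```

With this crude rate, P(T < 3 min) comes out at about 0.977, in line with the 0.975 obtained from the R code; the small difference presumably reflects how the rate was estimated there.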

Conclusions.

This model is very basic and simple, both to fit and to run, and therefore very quick to implement. It can be useful for optimizing the number of staff in the store and their roles, according to the expected inflow of customers and the average time needed to serve the current customers. The hypotheses seem reasonable, given the time of day considered and provided that the shop is not running a promotion, a discount or any other event that could lead customers to behave differently (for instance, during a sale customers are likely to tell each other to visit the store, so arrivals are no longer independent). For other times of the day, different parameters could be estimated by gathering more data and fitting separate exponential distributions.
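For staffing, what matters is the number of people rather than the number of groups. If groups arrive as a Poisson process with rate λ and group sizes are independent draws with mean E[S], the number of people arriving in t minutes is a compound Poisson variable with mean λtE[S] and variance λtE[S²]. The Python sketch below applies this to one hour; the helper name and the group-size probabilities are hypothetical placeholders, so the printed numbers only illustrate the calculation.

```python
def expected_people(rate_per_minute, minutes, size_probs):
    """Mean and variance of the number of people arriving in `minutes`,
    treating arrivals as a compound Poisson process: groups arrive at
    rate_per_minute and each group's size is drawn independently from size_probs."""
    mean_size = sum(k * p for k, p in size_probs.items())
    mean_sq_size = sum(k * k * p for k, p in size_probs.items())
    groups = rate_per_minute * minutes          # expected number of groups
    return groups * mean_size, groups * mean_sq_size

toy_probs = {1: 0.5, 2: 0.3, 3: 0.1, 4: 0.1}   # HYPOTHETICAL, not the observed shares
mean, var = expected_people(74 / 58.73, 60, toy_probs)
print(f"expected people per hour: {mean:.0f} (sd about {var ** 0.5:.0f})")
```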

![clip_image002[7] clip_image002[7]](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEipxFAL3jCQf8iBYduwZIC0Vb4S4xskS8il2qddE8-QaW3ilQBjXySqe36EASp7rytYVgEj7zoydBgb0RPrL2PXL6Ocq6CmEW5D-UTKLr94lefJruDC1z6rXrXV7VakZqPm2DOCNMKCIRs/?imgmax=800)